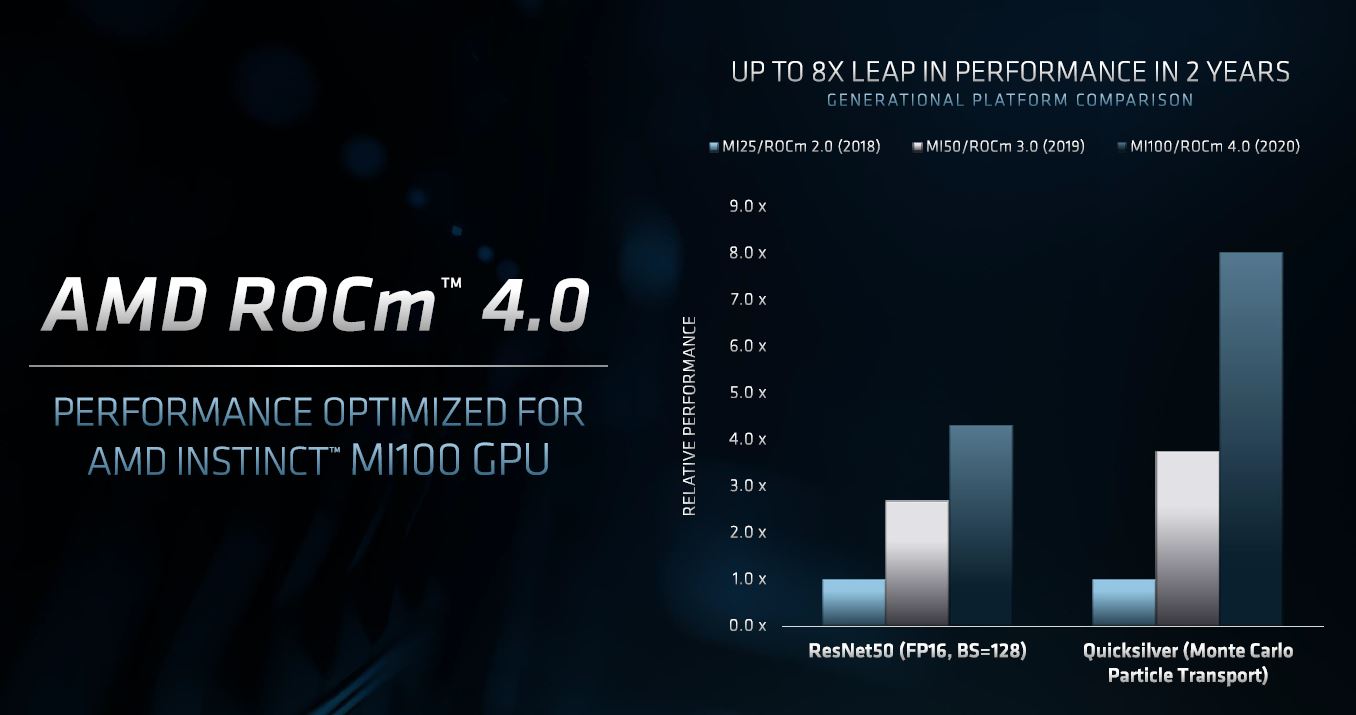

Significantly, the ROCm 4.5 release in November 2021, concurrent with the preview of the AMD Instinct MI200 series accelerators that are being deployed in the 1.5 exaflops “Frontier” supercomputer at Oak Ridge National Laboratory, the first US system to break through the exaflops barrier at 64-bit floating point precision, has unified memory support between CPUs and GPUs. AMD has a long history in traditional high performance computing, and it was able to win exascale-class systems only because of its commitment to enhancing ROCm into a full and complete stack for both HPC and AI workloads, something that arguably happened in 2020 when ROCm 4.0 was delivered. The ROCm stack has been maturing at an accelerating pace, right alongside the ever-improving AMD Instinct GPU compute hardware. It started out with common math libraries used in HPC and AI plus OpenCL, the effort for running C and C++ across heterogeneous compute engines that originally came out of Apple and became a standard in 2008.

Creating a platform is always a journey, and it is one that AMD has been on since it first put its weight behind the OpenCL approach to creating heterogeneous applications and then started Project Boltzmann in 2015 to create a broader platform that could compete against Nvidia’s CUDA.

AMD focused on the AI opportunity with ROCm first, and the ROCm 2.0 stack from 2018 was aimed predominantly at machine learning applications. The ROCm stack also supports OpenMP for C and C++ programming on multithreaded CPUs and GPUs; the GPU runtime for OpenMP is included in ROCm, and the CPU compiler and runtime are available on GitHub. The GPU runtimes are included in the ROCm stack, which is open source and available through AMD and on GitHub, and the CPU runtimes are available as open source on GitHub, too.
Project Boltzmann was able to create C++ code that could be offloaded to AMD GPUs but also to Nvidia GPUs through the Heterogeneous-computing Interface for Portability, or HIP, which is a CUDA-like API that allows runtimes to target a variety of CPUs as well as Nvidia and AMD GPUs. ROCm was first unveiled as “Project Boltzmann” back at the SC15 supercomputing conference in November 2015 as a means of providing an open source alternative to Nvidia’s closed source CUDA stack. AMD had been working on heterogeneous CPU-GPU computing for many years, including its Heterogeneous System Architecture, when Nvidia suddenly took the HPC market by storm with its CUDA software stack in 2008 and then rode the shockwave of the AI explosion in 2012, expanding CUDA to cover machine learning training and inference workloads in addition to HPC simulation and modeling. The software stack is always the hardest part of the platform to develop, and ROCm is no exception in this regard. The added twist: ROCm can also deploy GPU code for Nvidia accelerators and any other accelerators that support C++. We think it is a platform that now can compete head-to-head with Nvidia’s hardware plus its CUDA stack and Intel’s hardware plus its oneAPI stack. Just as a tree falling in the woods makes no noise if there is no one to hear it, great hardware and great software together are not a credible platform until a critical mass of people adopt those wares.

By this definition, the AMD ROCm open software stack is what turns a server or cluster of servers that employ AMD EPYC CPUs (or those of others) and some of its Radeon and AMD Instinct accelerators into a true platform. The third thing that is required to make a compute platform is an ecosystem of developers who create applications that run on the combined hardware and software.

In terms of speed of development and adoption, it helps for this software layer to be open source, but that is not a requirement for success. And when it comes to deploying the platform in production environments, the software stack must have enterprise-grade technical support and a reasonable pricing model. The latter enables companies to put what the developers have created on their laptops and across their proof of concept systems into production at scale.
